1 Department of Anatomy, Biochemistry & Physiology, John A. Burns School of Medicine, University of Hawaiʻi at Mānoa, Honolulu, USA

2 Department of Kinesiology and Rehabilitation Sciences, University of Hawaiʻi at Mānoa, Honolulu, USA

3 Department of Sports Science, Sendai University, Shibata District, Japan

4 UH/QMC MRI Research Center, John A. Burns School of Medicine, Honolulu, USA

5 Department of Otorhinolaryngology (Hals-Nasen-Ohren-Klinik), University Hospital Heidelberg (Universitätsklinikum), Heidelberg, Germany

6 Department of Anatomy, College of Medicine, Catholic University of Korea, South Korea

7 Department of Anatomy and Cell Biology, University of Heidelberg, Heidelberg, Germany

Collaborative online anatomy education has become increasingly utilized due to a trend toward student-centered independent learning, as well as the ongoing COVID-19 pandemic limiting in-person group activities. Anatomy education relies heavily on visuotactile experience, which presents a challenge during online instruction. To provide an effective experience, the Head and Neck block (Fall Semester, 2020) was presented as an online activity that included extended reality anatomy models derived from three-dimensional medical illustrations (artistic), dissections, plastinates, and segmentations, posted on the Sketchfab platform for student viewing. The purpose of this study was to assess student preference for anatomical models during online anatomy instruction. A photogrammetry workflow was developed to digitize dissected and plastinated specimens, which were posted to the sketchfab.com platform and presented via a secured university-based website hosting service (xrcore.jabsom.hawaii.edu). Segmented models were derived from cadaveric MRI scans, and artistic models were created from segmented graphics primitives, defined as nondivisible graphical elements, such as planes or spheres, for input or output within a computer-graphics system. Technical requirements were minimal and relied on several open-source or limited-subscription software packages. Model access (‘hits’) was recorded and compared using a chi-square analysis. Preferences for the model types were compared using a student survey (n = 79). Among all learning resources, actual dissections were most often ranked most preferred (34.2%). However, dissection models (plastinates and prosections) received the highest combined most/more preferred rating (54.4%), and plastinated models received the most hits per model, suggesting a broader preference for them as a learning resource. These results suggest that plastinated models are effective and engaging tools for teaching gross anatomy to medical students.

anatomy education; photogrammetry; plastination; workflow; XR visualization

Ms. Kumiko Hashida, Department of Kinesiology and Rehabilitation Sciences, University of Hawaiʻi at Mānoa, Honolulu, HI, 96822, USA, telephone +1 808 956 9057; hashidak@hawaii.edu


Collaborative online anatomy education has become increasingly utilized due to a trend toward student-centered independent learning as well as the ongoing coronavirus disease (COVID-19) pandemic limiting in-person group activities. However, anatomy education requires teaching three-dimensional spatial relationships, which are difficult to convey online. Recent advances in computer technology have facilitated the presentation of complex anatomical arrangements on personal electronic devices, primarily using artistic renderings of anatomical features (Hong et al., 2015). These efforts have translated into improved anatomical educational efficiency (Blavier & Nyssen, 2009; Nakamatsu et al., 2022). Preliminary evaluations have reported trends toward improved knowledge and clinical reasoning in students who use web-based modules (Kalet et al., 2007). Other studies have noted that few current online resources offer a comprehensive, surgically relevant framework that could usefully complement practical anatomy teaching (Choi et al., 2008; Iwanaga et al., 2021).

X-Reality (XR; extended reality, including augmented and virtual reality) visualization is a generalized term applied to immersive technology that merges physical and virtual experiences. This technology is rapidly being applied in many different disciplines. Total spending on AR/VR (AR, augmented reality; VR, virtual reality) was projected to reach as high as $215 billion by the end of 2021 (Chuah, 2019), and spending on healthcare applications is projected to reach $5.1 billion in 2025 (Elsayed et al., 2020). XR is taking hold in the medical and allied health professions, and it is important to expose health sciences students to this technology (Wish-Baratz et al., 2019). Additionally, problem-based learning scenarios and didactic information can be depicted simultaneously and presented to an individual or a group (Caudell et al., 2003; Alverson et al., 2008). However, a major bottleneck in creating instructional materials is data collection from actual anatomical structures, and generating computational assets remains problematic because the methods used to create content are time-consuming and laborious.

Photogrammetry is a technique that could accelerate anatomical content production. Multiple images are recorded and stitched together to create a 3D mesh model, to which surface shaders can be applied to achieve a realistic appearance. Applying this technique to plastinates could accelerate data collection while capturing the actual spatial features of anatomical structures, thus preserving true anatomical variation, anomalies, and pathologies. Such actual structures would provide more accurate information than the artistic renderings typically used in online learning content. Developing a cost-effective, rapidly deployable method for generating 3D anatomical content from plastinated structures, suitable for learning-module content creation and XR deployment, remains a laudable goal. However, it remains to be determined whether students perceive plastinated anatomical specimens as useful instructional assets in online teaching. The purpose of this study was to describe the use of plastinated specimens in online medical student teaching and to assess their perceived usefulness by medical students during the Head and Neck Gross Anatomy block (Fall, 2020).

Anatomical specimens used in this study were drawn from permanent donations, and all activities conformed to the standard operating procedures of the Willed Body Program (WBP) at the John A. Burns School of Medicine, University of Hawaiʻi at Mānoa (Labrash & Lozanoff, 2007). This study falls under the purview of Approval #00120, approved by the ORC Human Studies Program, University of Hawaiʻi at Mānoa, Honolulu, HI. Models were used for online instruction in the Head and Neck block (Fall, 2020) during the COVID-19 pandemic, when in-person activities, including large-group laboratory dissection, were canceled. Rather than providing didactic lectures, the anatomy faculty focused on online laboratory experiences, including case-based dissections of MRI-scanned cadavers typically used in the in-person dissection activity (Nakamatsu et al., 2022). Presentations included live dissections as well as online models posted to Sketchfab derived from a) artistic renderings, b) plastinates, c) prosections, and d) CT/MRI segmented models.

a) Artistic models

Artistic models were created using a skeleton as the base feature. The skeleton was created by digitizing individual bones, either by 3D laser scanning or from segmented models. These scans determine the locations of a vast number of 3D points and their spatial information. These artistic “primitives” existed as .obj files and were imported into Autodesk Maya (Autodesk Inc., San Rafael, California, USA), a 3D animation and modeling application. A Maya Embedded Language (MEL) script was developed to import characters from a scanned .obj mesh. Individual bones were edited based on vertex geometry, articulated electronically, retopologized, and smoothed using a spline graphics function. Soft tissues were sculpted and detailed using vertex manipulations. The resulting structures were fitted to contiguous morphological features based on qualitative observations taken directly from the cadaver. Six operations (push, pull, smooth, relax, pinch, and erase) were used to smooth and texture the model surface. Any remaining problematic geometry was corrected manually or with a clean-up tool within the software.

b) Plastinated specimens

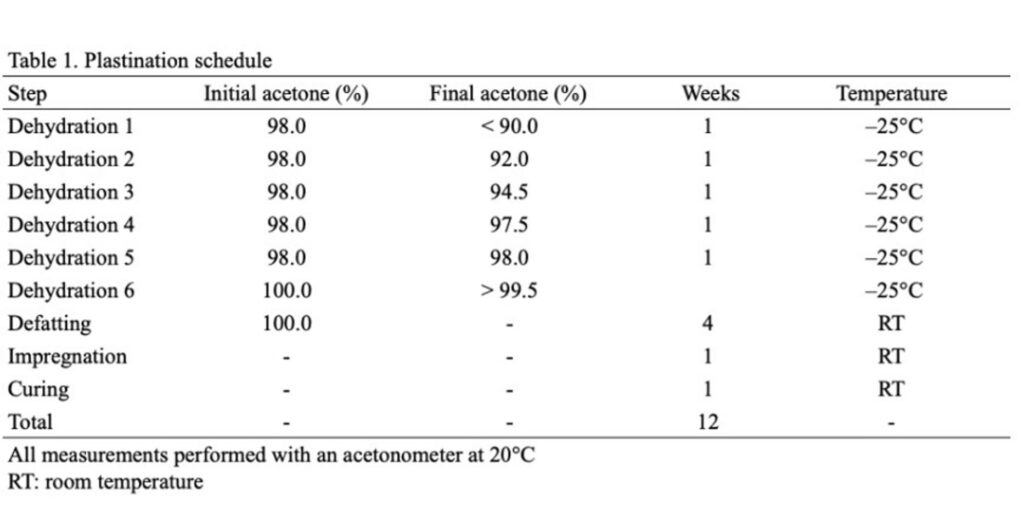

Head and neck specimens were obtained following routine cadaveric dissection and subjected to NCS-PR10 plastination (Table 1). Briefly, formalin-fixed specimens were dissected free from surrounding tissue, removed, and washed (12 hours), and then plastinated at room temperature (RT) using the routine steps of dehydration, defatting, forced impregnation, and curing (Raoof et al., 2007). Specimens were placed in a chemically resistant bucket with a sealable lid (-25 °C; 98% acetone at a 10:1 acetone-to-specimen volume ratio) and placed in an explosion-proof freezer (Lab-Line Frigid Cab, HI). Specimens were immersed sequentially in fresh absolute acetone (-25 °C) until a concentration of 99.5% was achieved, at which point they were considered adequately dehydrated. Defatting (degreasing) was performed by warming the specimens to room temperature, followed by sequential immersion in 2-3 fresh acetone baths (100%) until the acetone remained clear (99.9%). The dehydrated and defatted specimens were then submerged in a PR10 impregnation mixture of PR10 polymer (Biodur Products GmbH, Heidelberg, Germany) and Cr20 crosslinker (100:8) and placed in a medium-sized vacuum chamber attached to an oil-free two-stage vacuum pump (Labport, KNF Neuberger) at 22 °C (RT). Forced impregnation was achieved by applying vacuum (approximately 350 mmHg) until bubble release ceased (4 days). Specimens were blotted dry, wrapped to allow further drying (4 days), lightly coated with Ct32 crosslinker, and then wrapped and placed in an airtight plastic container for additional curing.
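The quantities in the schedule above can be turned into a small planning helper. The sketch below is hypothetical: it assumes that the 10:1 ratio means acetone volume to specimen volume, treats 100:8 as parts of PR10 to Cr20 in whatever unit is supplied (e.g., grams), and models the dehydration and defatting end points as acetometer readings of 99.5% and 99.9%. The function names are illustrative and are not part of any plastination software.

```python
from dataclasses import dataclass

# Values taken from the protocol text; their interpretation is an assumption.
ACETONE_TO_SPECIMEN = 10.0            # assumed: acetone volume : specimen volume
PR10_PARTS, CR20_PARTS = 100.0, 8.0   # PR10 polymer : Cr20 crosslinker
DEHYDRATION_ENDPOINT = 99.5           # % acetone at which dehydration is complete
DEFATTING_ENDPOINT = 99.9             # % acetone at which defatting is complete


@dataclass
class ImpregnationMix:
    pr10: float  # same unit as the batch total passed in
    cr20: float


def acetone_volume(specimen_volume_l: float) -> float:
    """Acetone needed for one bath at the assumed 10:1 ratio (litres in, litres out)."""
    return ACETONE_TO_SPECIMEN * specimen_volume_l


def impregnation_mix(total: float) -> ImpregnationMix:
    """Split a total batch amount into PR10 and Cr20 at 100:8."""
    parts = PR10_PARTS + CR20_PARTS
    return ImpregnationMix(pr10=total * PR10_PARTS / parts,
                           cr20=total * CR20_PARTS / parts)


def baths_until_endpoint(readings: list[float], endpoint: float) -> int:
    """Return how many successive baths were needed before the acetometer
    reading (measured at the end of each bath) reached the end point."""
    for bath_number, reading in enumerate(readings, start=1):
        if reading >= endpoint:
            return bath_number
    raise ValueError("end point not reached; another fresh acetone bath is needed")
```

For example, a 2 l specimen would need about 20 l of acetone per bath under this reading of the ratio, and a 1080 g impregnation batch splits into 1000 g of PR10 and 80 g of Cr20.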

Table 1. Plastination schedule

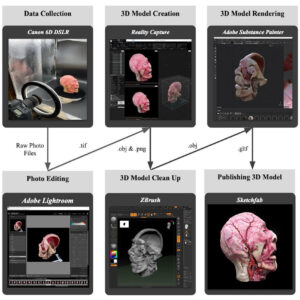

Figure 1. The 6-step workflow for model creation, visualization, and distribution for plastinated and prosection specimens and associated file types after each step

The 3D models of plastinated head and neck specimens were created using a six-step process: data collection, photo editing, model creation, model clean-up, model rendering, and model publishing (Fig. 1). First, plastinated head and neck specimens were photographed with a full-frame-sensor Canon 6D DSLR (Canon U.S.A., Inc., Melville, NY, USA). A fixed 50 mm lens or a 24–105 mm zoom lens was used, depending on specimen size and focusing distance. Polarizing filters on the lens and non-reflective film on the flash were used to minimize surface reflections. In addition, a black background and softbox lights were used to minimize harsh shadows and deliver even lighting. Individual specimens were placed on a turntable built from 3D-printed parts, an Arduino programmable microcontroller (Arduino.cc, Somerville, MA, USA), and an EasyDriver stepper motor driver (SparkFun, Niwot, CO, USA). Specimens were rotated through a full 360° revolution in 10° increments, and at each increment a ground signal was sent to the mounted camera shutter to automate the process and eliminate external disturbances during photo capture. Thirty-seven photographs were captured per revolution, and this process was repeated with the tripod-mounted camera at three different heights to maximize surface capture and ensure retention of important landmarks and textures.
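The capture loop described above can be sketched as a schedule generator. This is a hypothetical illustration, not the authors' Arduino firmware. It assumes that the 37 shots per revolution come from photographing both the 0° and the 360° positions (36 increments of 10°), and that the EasyDriver drives a 200-step (1.8°) motor in eighth-step mode with direct drive (`gear_ratio = 1`); all of these hardware constants are assumptions. Because 10° is not a whole number of microsteps under these assumptions, each move is derived from the rounded cumulative target so that rounding error does not build up over a revolution.

```python
STEP_DEG = 10               # rotation increment stated in the text
HEIGHTS = 3                 # camera height levels stated in the text
FULL_STEPS_PER_REV = 200    # assumption: common 1.8-degree stepper motor
MICROSTEPS = 8              # assumption: eighth-step mode on the driver


def microsteps_per_rev(gear_ratio: float = 1.0) -> float:
    """Microsteps needed for one full turntable revolution."""
    return FULL_STEPS_PER_REV * MICROSTEPS * gear_ratio


def capture_schedule(step_deg: int = STEP_DEG, heights: int = HEIGHTS,
                     gear_ratio: float = 1.0) -> list[tuple[int, int, int]]:
    """Return (height_index, angle_deg, microsteps_to_move_before_shot) per photo.

    Each revolution includes both the 0-degree and 360-degree positions, giving
    360 / step_deg + 1 shots. Moves are computed from the rounded cumulative
    target position, so the per-move rounding never accumulates."""
    per_rev = microsteps_per_rev(gear_ratio)
    shots_per_rev = 360 // step_deg + 1
    schedule = []
    for height in range(heights):
        position = 0  # microsteps already moved during this revolution
        for k in range(shots_per_rev):
            angle = k * step_deg
            target = round(angle / 360 * per_rev)
            # Firmware would step (target - position) microsteps, let the
            # specimen settle, then pull the shutter line to ground.
            schedule.append((height, angle, target - position))
            position = target
    return schedule
```

Under these assumptions each revolution uses 1600 microsteps in moves of 44 or 45, and the three height levels yield 111 photographs in total.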

All photographs were reviewed in Adobe Lightroom (Adobe Inc., Mountain View, California, USA) and adjusted to ensure maximum quality. White balance, color, shadows/highlights, contrast, and exposure were adjusted to ensure clarity of the specimen. The corrected photographs were exported as Tagged Image File Format (TIFF) files to the model-generating software RealityCapture (Capturing Reality s.r.o., Drienova 3, 821 01 Bratislava, Slovakia), which uses camera specifications and photograph alignments to construct point clouds representing the anatomical structure. A mesh is formed by connecting the points in the cloud, and colors are assigned to its vertices. The high-polygon mesh and its high-resolution texture were optimized in ZBrush (Pixologic Inc., Los Angeles, California, USA) to remove surface errors. A low-polygon version intended for real-time rendering was created with Substance Painter (Adobe Inc., Mountain View, California, USA). These data were used by the real-time rendering engine to simulate surface lighting and optimally visualize the anatomical structure.
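The reduction from a high-polygon scan to a low-polygon model for real-time rendering can be illustrated with vertex clustering, a simple decimation technique. This is a swapped-in, generic method shown only to make the idea concrete; it is not how ZBrush or Substance Painter process meshes, and the `cell` size is an arbitrary illustrative parameter. Vertices falling into the same cubic grid cell are merged into their average position, triangles are remapped to the merged vertices, and faces that collapse or become duplicates are discarded.

```python
from collections import defaultdict

Vertex = tuple[float, float, float]
Triangle = tuple[int, int, int]


def cluster_decimate(vertices: list[Vertex], triangles: list[Triangle],
                     cell: float) -> tuple[list[Vertex], list[Triangle]]:
    """Simplify a triangle mesh by merging all vertices that share a grid cell."""
    # Assign each vertex to an integer grid cell of edge length `cell`.
    cell_of = [tuple(int(c // cell) for c in v) for v in vertices]
    members = defaultdict(list)
    for i, key in enumerate(cell_of):
        members[key].append(i)

    # One output vertex per occupied cell, placed at the mean of its members.
    new_index = {}
    new_vertices = []
    for key, idxs in members.items():
        new_index[key] = len(new_vertices)
        n = len(idxs)
        new_vertices.append(tuple(sum(vertices[i][axis] for i in idxs) / n
                                  for axis in range(3)))

    # Remap triangles; drop collapsed and duplicated faces.
    seen = set()
    new_triangles = []
    for a, b, c in triangles:
        tri = (new_index[cell_of[a]], new_index[cell_of[b]], new_index[cell_of[c]])
        if len(set(tri)) < 3:
            continue  # two or more corners merged into one vertex
        key = tuple(sorted(tri))
        if key in seen:
            continue  # same face produced by another original triangle
        seen.add(key)
        new_triangles.append(tri)
    return new_vertices, new_triangles
```

A larger `cell` gives a coarser, lighter mesh; a cell smaller than the vertex spacing leaves the mesh unchanged.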

c) Prosections

Head and neck prosections were performed to highlight the anatomy relevant to the corresponding laboratory session. Following dissection, each specimen was subjected to the same workflow as the plastinated specimens (described above) to obtain XR models, which were subsequently posted to Sketchfab.

d) CT/MRI segmented images

For vascular system visualizations, whole-body cadaver CT scans were performed on an institution-owned 16-slice CT scanner (Toshiba Aquilion 16, Toshiba Medical Systems Manufacturing Asia Sdn. Bhd., Penang, Malaysia) from January 2013 to December 2018, using a previously reported standardized protocol (Paech et al., 2018): head/neck (120 kV, 170 mAs, 0.5 mm slice thickness, 0.1 mm increment, sharp kernel, brain window). For contrast-enhanced postmortem computed tomography (CEPMCT), the contrast agent Angiofil® (Fumedica AG, Muri, Switzerland) was used; it consists of iodized linseed oil and was developed specifically for postmortem use (Grabherr et al., 2011). A volume of 220 ml of contrast medium was diluted with 3.5 l of paraffin oil. The contrast agent was injected into the external carotid artery using a pneumatically driven pump [DRV1; Hellwig Individuelle Systemlösungen (HeInSys), Dautphetal, Germany], for a total of 3000 ml (1200 ml + 1800 ml). Three CT scans were acquired per body donor prior to the embalming procedure: the first acquisition was carried out before contrast agent administration (‘non-enhanced’) and the second after intra-arterial contrast agent injection (‘arterial phase’). This approach has been shown to enable good vascular filling, especially of the arterial system, including the precerebral and intracerebral arteries (Paech et al., 2018). In addition to enhancing the vascular system, CEPMCT also markedly improves the differentiation of tissue attenuation.
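The dilution and injection volumes above amount to simple arithmetic, worked out here under the assumption that the volumes are additive:

$$
\phi_{\text{Angiofil}} = \frac{220\ \text{ml}}{220\ \text{ml} + 3500\ \text{ml}} = \frac{220}{3720} \approx 0.059,
\qquad
V_{\text{injected}} = 1200\ \text{ml} + 1800\ \text{ml} = 3000\ \text{ml} \approx 0.81 \times 3720\ \text{ml}
$$

That is, the prepared mixture is roughly 6% Angiofil by volume, and the 3000 ml injected corresponds to about 81% of the prepared volume.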

Additional MR scans of cadavers were used to generate segmented models. For these specimens, the workflow described by Nakamatsu et al. (2022) was followed. Briefly, body donations were obtained through the WBP at the John A. Burns School of Medicine (JABSOM) and processed routinely for embalming and preservation. The donors were transported to the MRI facility and scanned with a Siemens Prisma 3T MRI scanner (Siemens Medical Solutions USA, Inc., Malvern, PA, USA). Scan specifications were: 1) Turbo Spin Echo sequence (T2w): transversal head images of 0.5 × 0.5 × 1 mm³ resolution obtained in two segments (matrix size 512 × 512 × 128 each) using a FOV of 240 mm, 128 slices, 150° flip angle, TR/TE 13750/97 ms, and a turbo factor of 17; 2) MPRAGE (T1w): sagittal head images of 0.5 × 0.5 × 0.1 mm³ resolution obtained using a FOV of 240 mm, 192 slices per slab (3D), 9° flip angle, TR/TE/TI 2580/3.67/1300 ms, and a turbo factor of 256. Anonymized DICOM folders were uploaded to the Anatomy NAS storage system. Scans were transferred to Rad3D software (Radial3D Inc., Honolulu, Hawaii, USA) for assessment by a radiologist, who completed a limited report. Scans were analyzed using Mimics software (version 21.0, Materialise, Belgium), and segmented models were rendered.
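Segmentation software such as Mimics typically offers intensity thresholding and region-growing tools. The sketch below illustrates that general idea on a toy volume; it is not Mimics' implementation. It assumes the scan is available as a nested list indexed `volume[z][y][x]`, and the threshold window in the usage note is a hypothetical placeholder rather than a value used in this study. Starting from a seed voxel, it collects every 6-connected voxel whose intensity lies within the window, which is how a contrast-filled vessel can be separated from unconnected bright structures.

```python
from collections import deque

# The six face-adjacent neighbours of a voxel.
NEIGHBOURS = ((1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1))


def region_grow(volume, seed, lo, hi):
    """Return the set of (z, y, x) voxels 6-connected to `seed` whose value
    lies within [lo, hi]; an empty set if the seed itself is outside the window."""
    nz, ny, nx = len(volume), len(volume[0]), len(volume[0][0])
    z, y, x = seed
    if not lo <= volume[z][y][x] <= hi:
        return set()
    region = {seed}
    queue = deque([seed])
    while queue:  # breadth-first flood fill
        z, y, x = queue.popleft()
        for dz, dy, dx in NEIGHBOURS:
            nb = (z + dz, y + dy, x + dx)
            if (0 <= nb[0] < nz and 0 <= nb[1] < ny and 0 <= nb[2] < nx
                    and nb not in region
                    and lo <= volume[nb[0]][nb[1]][nb[2]] <= hi):
                region.add(nb)
                queue.append(nb)
    return region
```

In a toy volume with a hypothetical window of 200 to 3000, a seed placed inside a bright "vessel" returns only the connected vessel voxels, while an isolated bright voxel elsewhere is left unselected.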

Online presentation and model usage assessment

Second-year medical students (Fall, 2020) were responsible for viewing, prior to the live-stream dissection, an online dissection guide created specifically for the Head and Neck course using WordPress and posted to a secured JABSOM server. The guide described the online Head and Neck dissections and provided links to the four types of models posted on Sketchfab. Streaming presentations included the actual cadaver-based dissection performed in real time by instructors. During these online dissections, instructors supplemented the sessions with demonstrations using the four model types to illustrate specific points of anatomical relevance.

During the validation process, two of the model types (artistic and segmented) were shown to be easily identifiable by appearance. Plastinated and prosection models were easily distinguished from both artistic and segmented models, but not from one another. Therefore, plastinates and prosections were grouped into one category, called ‘dissection models’, for student selection in the survey. Accession numbers were then determined using the WordPress ExactMetrics plugin and the Sketchfab view counter to quantitatively compare the number of ‘hits’ for each group of models. Thus, the comparison of plastinated and prosected models was retrospective and objective, since the students were ‘blinded’ to the origin of models derived directly from cadaveric dissections. Viewing preference was determined by tabulating accession ‘hits’ for each model type and comparing them for significant differences using a chi-square analysis, with p < .01 considered significant.

Survey Instrument

At the end of the head/neck unit, students were given a questionnaire that had previously been validated with a small sample of medical students not included in the study cohort and with one expert anatomist. Students were asked to rank the perceived effectiveness of the actual dissection and of the model assets posted to Sketchfab. Ranking was exclusion-based, on a scale from 1 (most preferred) to 5 (least preferred). An overall agreement (OA) percentage was used in this study, defined as the number of students who partially or fully agreed per question divided by the total number of students who answered the corresponding question. Additionally, students were given the opportunity to provide open-ended comments.
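A minimal sketch of the OA calculation defined above follows. It assumes that "partially or fully agreed" corresponds to a rank of 2 (more preferred) or 1 (most preferred), as in the Figure 4 summary, and that an unanswered item is recorded as `None`; the function name and the example responses are hypothetical.

```python
from typing import Optional, Sequence


def overall_agreement(ranks: Sequence[Optional[int]],
                      agree_ranks: tuple[int, ...] = (1, 2)) -> float:
    """Percentage of respondents who answered an item and gave it an
    'agreeing' rank (assumed: 1 = most preferred, 2 = more preferred).
    Items left unanswered (None) are excluded from the denominator."""
    answered = [r for r in ranks if r is not None]
    if not answered:
        raise ValueError("no respondent answered this item")
    agreed = sum(1 for r in answered if r in agree_ranks)
    return 100.0 * agreed / len(answered)
```

For example, `overall_agreement([1, 2, 3, None, 5])` returns 50.0, because two of the four respondents who answered ranked the asset 1 or 2.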

Workflow

The workflow, comprising a six-step process, is shown in Figure 1. The time required depends on the size and complexity of the model as well as the experience of the operator. For the specimen scanned in Figure 1, data collection required approximately 10 minutes; photo editing, 5 minutes; model creation, 2 hours; model clean-up, 1 hour; model rendering, 15 minutes; and model publishing, 5 minutes (approximately 3 hours 35 minutes in total).

Models

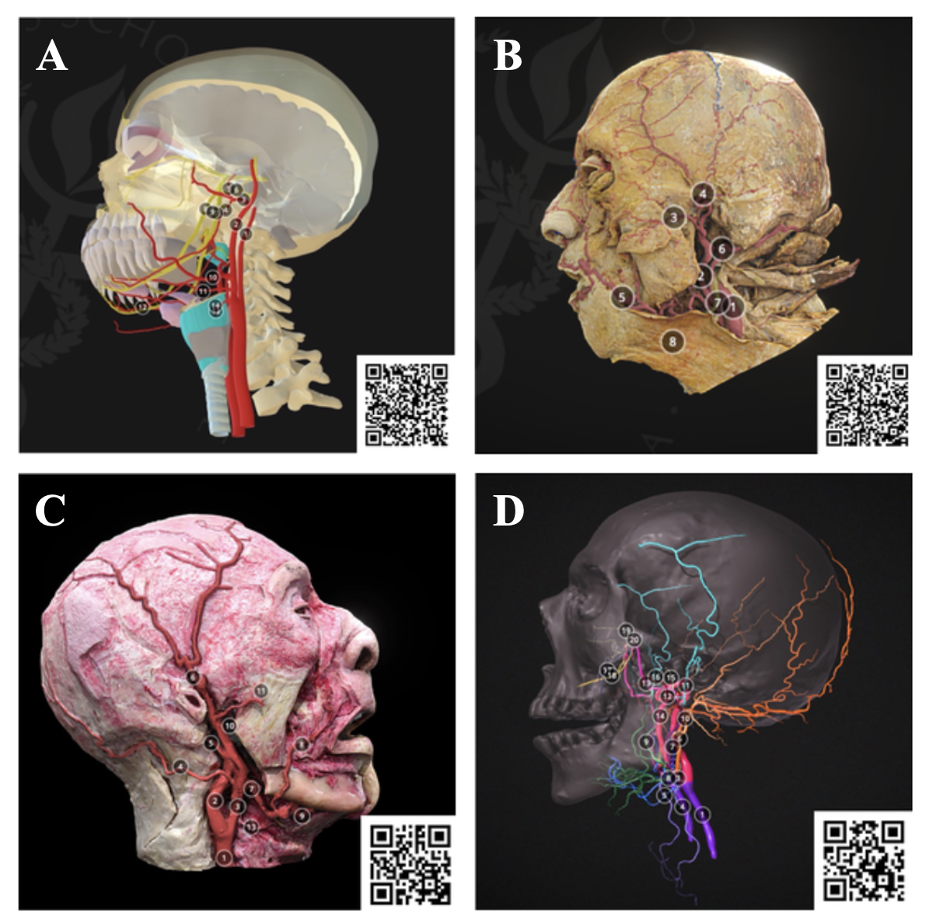

Figure 2. Examples of models focusing on the external carotid artery used in the Head and Neck Dissection modules. A) Artistic, B) Prosection, C) Plastinates, D) Segmentation.

Artistic, prosection, plastinated, and segmented models of the carotid system are compared in Figure 2 and are available on Sketchfab. Structures identified included the common carotid, internal carotid, external carotid, occipital, posterior auricular, superficial temporal, facial, lingual, maxillary, transverse facial, and superior thyroid arteries. The artistic model (Fig. 2A) presented the branches of the external carotid artery schematically. In this model, the internal carotid artery, external carotid artery, maxillary artery, inferior alveolar artery, middle meningeal artery, auriculotemporal nerve, inferior alveolar nerve, lingual nerve, and chorda tympani were labeled. The artistic renderings are highly stylized, with discrete, color-differentiated structures that make them easy to use. The prosection model (Fig. 2B) was latex-injected, which facilitates structural differentiation; however, its structures are less clearly differentiated than those of the artistic model. The plastinates generally have better structural definition and can be color-enhanced to achieve the specificity needed for instructional purposes (Fig. 2C). The segmented models are highly stylized but can be annotated to achieve a high degree of feature specification (Fig. 2D).

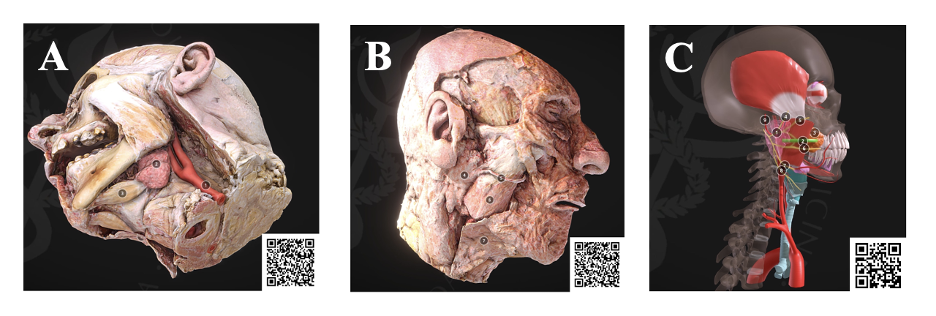

Figure 3. The workflow enables features of the plastinates, such as the salivary glands, to be modified to emphasize relevant anatomy (A, B), with artistic models providing supplemental understanding (C)

As another example, the submandibular triangle and parotid region were displayed alongside the same area seen in the streaming dissection and to show their spatial relationship to the carotid system. These models were used to display the submandibular gland and the anterior belly of the digastric muscle (Fig. 3A). On the contralateral side, the overlying structures, including the parotid gland, parotid duct, masseter muscle, and platysma muscle, could be viewed (Fig. 3B). Additional artistic renderings were used as references for the plastinates (Fig. 3C).

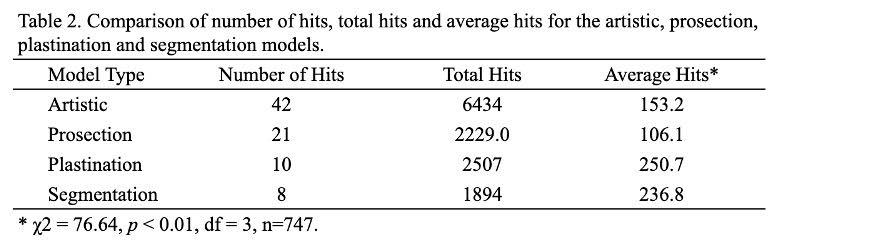

The online presentation of Head and Neck anatomy comprised 9 sessions. The models were presented during the online streaming dissection and in written form through a website on the JABSOM server (xrcore.jabsom.hawaii.edu). A total of 81 models were accessed across the 9 sessions, including 42 artistic, 21 prosection, 10 plastinated, and 8 segmentation models (Table 2). A total of 13,064 views were recorded. The most frequently accessed models were artistic (6434), followed by plastinated (2507), prosection (2229), and segmentation (1894) models. Average hits per model were highest for plastinated models (250.7), followed by segmentation (236.8), artistic (153.2), and prosection (106.1) models. Chi-square analysis indicated a significant difference in accession ‘hits’ among model types (χ²(3) = 76.64, p < 0.01), with plastinates showing the highest average number of hits per model (Table 2).

Table 2. Comparison of number of hits, total hits, and average hits for the artistic, prosection, plastination, and segmentation models
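The averages in Table 2 can be recomputed from the model counts and hit totals, using 2507 hits for the 10 plastinated models (the total consistent with their reported average of 250.7). The text does not state exactly which quantities entered the chi-square test. This sketch assumes, as a hypothetical configuration, that average hits per model were compared against an equal expected value for each type; under that assumption it gives a statistic of about 76.1, close to but not identical with the reported 76.64, so the published analysis presumably differed in some detail. The critical value 11.345 is the standard chi-square cut-off for 3 degrees of freedom at α = 0.01.

```python
# Model counts and total hits per model type, as reported in the text.
models = {"Artistic": 42, "Prosection": 21, "Plastinated": 10, "Segmentation": 8}
hits = {"Artistic": 6434, "Prosection": 2229, "Plastinated": 2507, "Segmentation": 1894}

CRITICAL_DF3_P01 = 11.345  # chi-square critical value, df = 3, alpha = 0.01


def average_hits(hits: dict[str, int], models: dict[str, int]) -> dict[str, float]:
    """Hits per model for each model type."""
    return {k: hits[k] / models[k] for k in hits}


def chi_square_uniform(observed: list[float]) -> float:
    """Pearson chi-square statistic of `observed` against equal expected values."""
    expected = sum(observed) / len(observed)
    return sum((o - expected) ** 2 / expected for o in observed)


if __name__ == "__main__":
    avg = average_hits(hits, models)
    for name, value in avg.items():
        print(f"{name}: {value:.1f} hits per model")
    stat = chi_square_uniform(list(avg.values()))
    print(f"chi-square (df = 3) = {stat:.2f}; significant at 0.01: {stat > CRITICAL_DF3_P01}")
```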

Survey

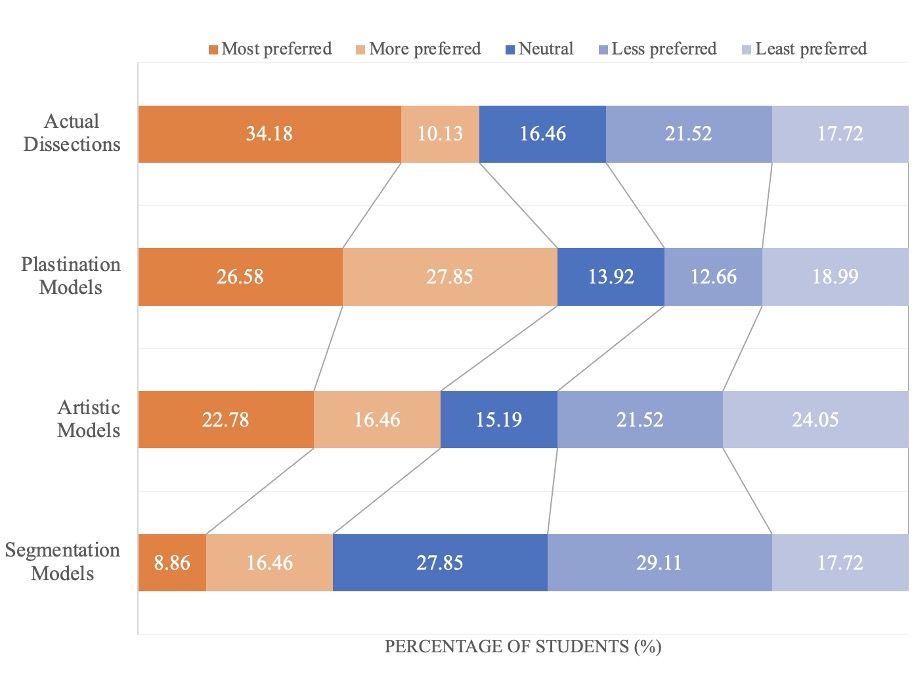

Seventy-nine second-year medical students completed the survey on their preferences among learning resources: actual dissection, dissection models (prosections and plastinates), artistic models, CT/MRI segmented models, and “other assets”, which included materials such as 2D pictures, radiological specimens, and CT/X-ray images (Fig. 4). Actual dissection was selected as the most preferred learning resource by 34.2% of students (Fig. 4), followed by dissection models (26.6%), artistic models (22.8%), and segmentations (8.9%). However, dissection models showed the largest OA (54.4%), followed by actual dissections (44.3%), artistic models (39.2%), and segmentations (25.3%). Thus, Sketchfab dissection models were the most valued assets, and within this category, plastinated models received more hits per model than prosections (Table 2).

Figure 4. Medical students’ preferences for online anatomy educational assets. Although streaming online dissections were most often ranked most preferred (34.2%), the overall agreement (OA; most and more preferred combined) was greatest for Sketchfab dissection models (54.4%)
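Because every survey percentage is a share of 79 respondents, each reported value should correspond to a whole number of students. The following sketch checks this, assuming that all 79 students answered each item and that percentages were rounded to one decimal place; the helper name `implied_count` is hypothetical.

```python
N_RESPONDENTS = 79  # survey sample size stated in the text


def implied_count(percent: float, n: int = N_RESPONDENTS, decimals: int = 1):
    """Return the whole number of students k for which 100*k/n rounds to
    `percent`, or None if no whole count out of n produces that value."""
    matches = [k for k in range(n + 1) if round(100 * k / n, decimals) == percent]
    return matches[0] if matches else None
```

Under these assumptions, 34.2% corresponds to 27 students and 54.4% to 43 students, whereas a value such as 54.3% cannot arise from any whole count out of 79, which makes the helper a quick way to catch rounding or transcription errors.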

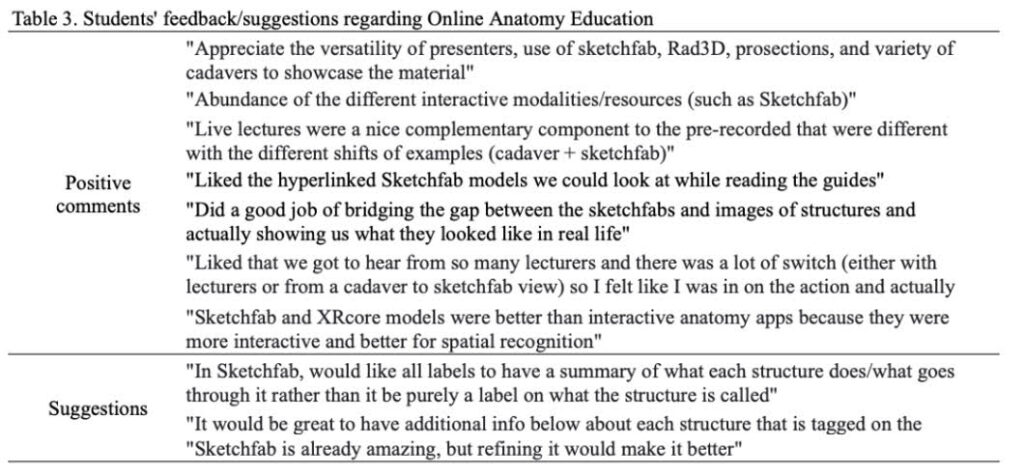

Responses to opinion-based questions concerning online anatomy instruction were tabulated, and representative comments are provided in Table 3. Most responses were positive; for example, students reported that live presentations helped them stay engaged with the content and provided better spatial recognition than online anatomy apps. Students mentioned that alternating between the cadaver and the model types to explain morphological spatial relationships facilitated their understanding. Students also stated that the abundance of resources and the hyperlinks to online models helped them better understand and learn the lecture content. There were also suggestions for future improvement; for example, some students suggested including physiological information with the models and providing more annotations.

Table 3. Students’ feedback/suggestions regarding Online Anatomy Education

Plastination is a unique anatomical preservation technique developed by von Hagens et al. (1987) that has been used in a broad variety of educational settings. The technique replaces water and lipids in the tissues with curable polymers, resulting in dry, odorless, and durable specimens. Although widely applied, it still appears to be underappreciated in various educational venues (Azu et al., 2021). Ethical issues have emerged because of commercial applications (Jones, 2002; Jones and Whitaker, 2009), and these have been compounded by online presentations in which donor identity could potentially be recognized. However, systems are being developed and implemented to ensure the anonymity of online cases, using sophisticated digital recognition technologies to deface MR and CT scans (Schwarz et al., 2021). Facial de-identification systems applicable to plastinates may soon be readily available, rendering them suitable for posting on public platforms.

Photogrammetry provides a practical method to record three-dimensional representations of real-life objects. Multiple photographs are composited to produce a 3D surface mesh representing the object, which can then be rendered, digitally annotated, and distributed to provide real-time instructional interaction online. However, photogrammetry has limitations with deeply contoured or occluded surfaces, because the camera's line of sight becomes obstructed, leading to data loss in specific regions of the morphological feature. Newer tracking methods can use the model as a computer-vision target for recognizing the real-life object, improving surface detection. The data can also be optimized for 3D printing at scale to serve as physical models. There is significant educational potential in combining photogrammetry models with segmented and volumetric data from medical scans such as CT and MRI (Nakamatsu et al., 2022), since internal structures can then be viewed in the mesh together with external surface qualities and diffuse color information (Chang et al., 2016). This approach also provides a permanent record of unique pathological structures from temporary donations scheduled for cremation.
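Reconstructing a surface point from overlapping photographs ultimately rests on triangulation: the same feature seen from two camera positions defines two viewing rays whose near-intersection locates the point in 3D. The sketch below shows the simple midpoint method under idealized assumptions (known camera centres and ray directions, no lens distortion). It illustrates the principle only and is not the algorithm used by RealityCapture; production photogrammetry software additionally estimates the camera positions themselves and refines them jointly.

```python
Vec = tuple[float, float, float]


def sub(a: Vec, b: Vec) -> Vec:
    return tuple(x - y for x, y in zip(a, b))


def add(a: Vec, b: Vec) -> Vec:
    return tuple(x + y for x, y in zip(a, b))


def scale(a: Vec, s: float) -> Vec:
    return tuple(x * s for x in a)


def dot(a: Vec, b: Vec) -> float:
    return sum(x * y for x, y in zip(a, b))


def triangulate_midpoint(c1: Vec, d1: Vec, c2: Vec, d2: Vec) -> Vec:
    """Given camera centres c1, c2 and ray directions d1, d2 toward the same
    feature, return the midpoint of the shortest segment joining the two rays
    (c1 + s*d1 and c2 + t*d2)."""
    w0 = sub(c1, c2)
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w0), dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; the point cannot be triangulated")
    # Parameters of the closest points on each ray (least-squares solution).
    s = (b * e - c * d) / denom
    t = (a * e - b * d) / denom
    p1 = add(c1, scale(d1, s))
    p2 = add(c2, scale(d2, t))
    return scale(add(p1, p2), 0.5)
```

When the two rays truly intersect, as for noise-free observations, the midpoint is the intersection itself; with real, noisy image measurements the rays are slightly skew and the midpoint gives a compromise position.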

A previous method for digitizing plastinated specimens used hand-held scanners to digitize the surface (Tunali et al., 2011). Although this method produced useful models, the scanning procedure relied on expensive technology that is no longer easily available. Other approaches have used sequential sections of plastinated specimens (Lozanoff et al., 2003; Doll et al., 2004; Sora et al., 2007). These approaches are particularly effective with the P40 method; however, aligning the sections requires significant user input. Cone-beam computed tomography (CBCT) and CT methods have been used to generate data sets and have provided useful output (Chang et al., 2016; da Silva et al., 2020). However, CBCT and CT specimen scanning require recurring financial expenditure. The photogrammetry approach avoids costly investments and can effectively serve as the data-collection step within the workflow.

A variety of anatomical models and resources should be used, including but not limited to cadaver dissections, plastinates, prosections, artistic models, segmentation models, and/or 2D imaging, as each has advantages and disadvantages. One advantage of cadaver dissection is the engagement of multiple senses, including touch through palpation, which encourages a better understanding of spatial relationships (Rizzolo and Stewart, 2006; DeHoff et al., 2011). However, the availability of cadavers has been decreasing, and cost and space requirements have been issues. The benefits of online models are that students can access them anywhere and that the models can be retained. Disadvantages of online models include the absence of palpation and of opportunities to practice dissection. These considerations suggest that using a variety of models may improve comprehension and understanding of anatomy.

The value of cadaver dissection in learning anatomy has been discussed (Flack and Nicholson, 2018; McMenamin et al., 2018). Mathiowetz et al. (2016) reported that the group that used the gross anatomy laboratory had a significantly higher grade percentage, self-perceived learning, and satisfaction than the group that used online anatomy software. On the other hand, students perceive online and remote learning methods as highly convenient for the study and analysis of anatomical structures (O’Byrne et al., 2008; Kuyatt and Baker, 2014). Interactive online resources can be useful for a deep understanding of anatomy. Rather than choosing between gross anatomy dissection and online models, educators can use multiple technologies to enhance, rather than replace, existing teaching practices.

Our experience during the COVID-19 pandemic allowed us to test the value of dissection and online streaming dissection using a variety of models by comparing students’ preferences. When physical interaction is limited, as during the COVID-19 pandemic, remote access to virtual models encourages collaboration and discussion at a safe distance. A variety of virtual platforms allow collaboration and visualization of structures on numerous electronic devices (Hong et al., 2015). Rad3D is a Digital Imaging and Communications in Medicine (DICOM) viewer that can be accessed on mobile devices. Sketchfab is another virtual platform that is emerging as a hub for sharing 3D models. Online platforms make information accessible to a broader range of audiences. Publishing models under a Creative Commons license can provide free non-commercial access through an outlet that is readily available to users. XR technology can also be applied as a non-destructive means of using museum specimens, creating large samples for conveying unique and novel anatomical information (Nisiotis et al., 2019; Sugiura et al., 2020; Mikami et al., 2022). This provides a unique opportunity for collaborative research and examination of potentially large collections of anatomical plastinates.

Museum specimens are particularly attractive for 3D imaging, and various workflows to generate 3D models have been described previously (Chhem et al., 2006; Tomaka et al., 2009; Jutras, 2010; Jocks et al., 2015). Museum specimens are of interest due to their age, historical relevance, and uniqueness (Marreez et al., 2010), but they also pose obstacles: they are fragile and accessible only to individuals who can visit their location. Plastinates are being used more frequently in museum displays, and online presentation would permit complete visualization of structures from various perspectives and depths, as well as access by a broad range of audiences. Future work will be directed at applying this workflow to old and rare museum specimens for online display.

As a whole, students perceive online learning as highly convenient for the study and analysis of anatomical structures. A variety of virtual models, including dissection, plastinated, artistic, and CT/MRI segmented models, allow for collaboration and visualization of structures. The plastinated models enrich the students’ anatomy learning experience by enabling viewing from all angles and preserving accurate spatial relationships among structures. Plastinated models also show true anatomical variation, disease, and anomalies, which may have helped students apply anatomical knowledge in a clinical context. Future work will be directed at creating freely available online anatomical libraries and expanding their context and interactivity, including case studies and virtual reality (VR) for immersive exploration of 3D structures.

Acknowledgments

We would like to thank Professor Brittany Biggs (Academy of Creative Media, University of Hawaii at Manoa) and media interns, Sylvia Lee, Ross Turner, Troy Macris, and Isaiah Sanchez for assistance in model development and editing.

The authors sincerely thank those who donated their bodies to science so that this research could be performed. These donors and their families deserve our highest gratitude.

Alverson DC, Saiki SM Jr, Kalishman S, Lindberg M, Mennin S, Mines J, Serna L, Summers K, Jacobs J, Lozanoff S, Lozanoff B, Saland L, Mitchell S, Umland B, Greene G, Buchanan HS, Keep M, Wilks D, Wax DS, Coulter R, Goldsmith TE, Caudell TP. 2008: Medical students learn over distance using virtual reality simulation. Simul Healthc 3: 10-15.

https://doi.org/10.1097/SIH.0b013e31815f0d51

Azu OO, Naidu ECS, Trinity M, Naidu JS. 2021: The use of plastinated specimens in anatomical education in the University of KwaZulu-Natal: Need for advocacy. J Plast 33: 4-12.

https://doi.org/10.56507/EICY3880

Blavier A, Nyssen AS. 2009: Influence of 2D and 3D view on performance and time estimation in minimal invasive surgery. Ergonomics 52: 1342-1349.

https://doi.org/10.1080/00140130903137277

Caudell TP, Summers J, Holten J IV, Hakamata T, Mowafi M, Jacobs J, Lozanoff BK, Lozanoff S, Wilks D, Keep M, Saiki S, Alverson D. 2003: Virtual patient simulator for distributed collaborative medical education. Anat Rec 270B: 23-29.

https://doi.org/10.1002/ar.b.10007

Chang CW, Atkinson G, Gandhi N, Farrell ML, Labrash S, Smith AB, Norton NS, Matsui T, Lozanoff S. 2016: Cone beam computed tomography of plastinated hearts for instruction of radiological anatomy. Surg Radiol Anat 38: 843-853.

https://doi.org/10.1007/s00276-016-1645-6

Chhem RK, Woo JKH, Pakkiri P, Stewart E, Romagnoli C, Garcia B. 2006: CT imaging of wet specimens from a pathology museum: How to build a "virtual museum" for radiopathological correlation teaching. HOMO J Comp Hum Biol 57: 201-208.

https://doi.org/10.1016/j.jchb.2006.03.003

Choi A, Tamblyn R, Stringer M. 2008: Electronic resources for surgical anatomy. ANZ J Surg 78: 1082-1091.

https://doi.org/10.1111/j.1445-2197.2008.04755.x

Chuah SH-W. 2019: Wearable XR-technology: literature review, conceptual framework and future research directions. Int J Technol Mark 13: 205-259.

https://doi.org/10.1504/IJTMKT.2019.104586

da Silva AF, Cerqueira P, Baptista CAC. 2020: 3D reconstruction of silicone (S10 Biodur) Plastinated specimens using computed tomography scanning. J Plast 32: 18-25.

https://doi.org/10.56507/HPHJ5119

DeHoff ME, Clark KL, Meganathan K. 2011: Learning outcomes and student-perceived value of clay modeling and cat dissection in undergraduate human anatomy and physiology. Adv Physiol Educ 35: 68-75.

https://doi.org/10.1152/advan.00094.2010

Doll F, Doll S, Kuroyama M, Sora MC, Neufeld E, Lozanoff S. 2004: Computerized reconstruction of a plastinated human kidney using serial tissue sections. J Plast 19: 12-19.

https://doi.org/10.56507/DCNL6651

Elsayed M, Kadom N, Ghobadi C, Strauss B, Al Dandan O, Aggarwal A, Anzai Y, Griffith B, Lazarow F, Straus CM, Safdar NM. 2020: Virtual and augmented reality: potential applications in radiology. Acta Radiol 61: 1258-1265.

https://doi.org/10.1177/0284185119897362

Flack NA, Nicholson HD. 2018: What do medical students learn from dissection? Anat Sci Educ 11: 325-335.

https://doi.org/10.1002/ase.1758

Grabherr S, Doenz F, Steger B, Dirnhofer R, Dominguez A, Sollberger B, Gygax E, Rizzo E, Chevallier C, Meuli R, Mangin P. 2011: Multi-phase post-mortem CT angiography: development of a standardized protocol. Int J Legal Med 125: 791-802.

https://doi.org/10.1007/s00414-010-0526-5

Hong TM, Bezard G, Lozanoff BK, Labrash S, Lozanoff S. 2015: Presentation of anatomical variations using the Aurasma mobile app. Hawaii J Med Public Health 74: 16-21.

Iwanaga J, Kamura Y, Nishimura Y, Terada S, Kishimoto N, Tanaka T, Tubbs RS. 2021: A new option for education during surgical procedures and related clinical anatomy in a virtual reality workspace. Clin Anat 34: 496-503.

https://doi.org/10.1002/ca.23724

Jocks I, Livingston DL, Rea PM. 2015: An investigation to examine the most appropriate methodology to capture historical and modern preserved anatomical specimens for use in the digital age to improve access. Proceedings of the INTED2015 Conference, Madrid, Spain, pp: 6377-6386. ISBN: 978-84-606-5763-7.

Jones DG. 2002: Re-inventing anatomy: the impact of plastination on how we see the human body. Clin Anat 15: 436-440.

https://doi.org/10.1002/ca.10040

Jones DG, Whitaker MI. 2009: Engaging with plastination and the Body Worlds phenomenon: a cultural and intellectual challenge for anatomists. Clin Anat 22: 770-776.

https://doi.org/10.1002/ca.20824

Jutras LC. 2010: Magnetic resonance of hearts in a jar: breathing new life into old pathological specimens. Cardiol Young 20: 275-283.

https://doi.org/10.1017/S1047951109991521

Kalet AL, Coady SH, Hopkins MA, Hochberg MS, Riles TS. 2007: Preliminary evaluation of the Web Initiative for Surgical Education (WISE-MD). Am J Surg 194: 89-93.

https://doi.org/10.1016/j.amjsurg.2006.12.035

Kuyatt BL, Baker JD. 2014: Human anatomy software use in traditional and online anatomy laboratory classes: Student-perceived learning benefits. J Coll Sci Teach 43: 14-19.

https://doi.org/10.2505/4/jcst14_043_05_14

Labrash S, Lozanoff S. 2007: Standards and procedures of the Willed Body Donation Program at the John A. Burns School of Medicine. Hawaii Med J 66: 74-75.

Lozanoff S, Lozanoff BK, Sora M-C, Rosenheimer J, Keep MF, Saland L, Tregear J, Jacobs J, Saiki S, Alverson D. 2003: Anatomy and the access grid: Exploiting plastinated brain sections for use in distributed medical education. Anat Rec 270B: 30-37.

https://doi.org/10.1002/ar.b.10006

Marreez YM, Willems LN, Wells MR. 2010: The role of medical museums in contemporary medical education. Anat Sci Educ 3: 249-253.

https://doi.org/10.1002/ase.168

Mathiowetz V, Yu CH, Quake‐Rapp C. 2016: Comparison of a gross anatomy laboratory to online anatomy software for teaching anatomy. Anat Sci Educ 9: 52-59.

https://doi.org/10.1002/ase.1528

McMenamin PG, McLachlan J, Wilson A, McBride JM, Pickering J, Evans DJ, Winkelmann A. 2018: Do we really need cadavers anymore to learn anatomy in undergraduate medicine? Med Teach 40: 1020-1029.

https://doi.org/10.1080/0142159X.2018.1485884

Mikami B, Hynd T, Lee UY, DeMeo J, Thompson J, Sokiranski R, Doll S, Lozanoff S. 2022: Extended reality visualization of medical museum specimens: Online case presentation of conjoined twins curated by Dr. Jacob Henle between 1844-1852. Transl Res Anat (submitted).

https://doi.org/10.1016/j.tria.2022.100171

Nakamatsu N, Aytaç G, Mikami B, Thompson J, Davis M III, Rettenmeier C, Maziero D, Stenger VA, Labrash S, Lenze S, Torigoe T, Lozanoff BK, Kaya B, Smith A, Miles JD, Lee U-Y, Lozanoff S. 2022: Case-based radiological anatomy instruction using cadaveric MRI imaging and delivered with extended reality web technology. Eur J Radiol 146: 110043.

https://doi.org/10.1016/j.ejrad.2021.110043

Nisiotis L, Alboul L, Beer M. 2019: Virtual museums as a new type of cyber-physical-social system. In: De Paolis L, Bourdot P (eds) Augmented Reality, Virtual Reality, and Computer Graphics. AVR 2019. Lecture Notes in Computer Science, vol 11614, pp: 256-263.

https://doi.org/10.1007/978-3-030-25999-0_22

O'Byrne PJ, Patry A, Carnegie JA. 2008: The development of interactive online learning tools for the study of anatomy. Med Teach 30: 260-271.

https://doi.org/10.1080/01421590802232818

Paech D, Klopries K, Doll S, Nawrotzki R, Schlemmer HP, Giesel FL, Kuner T. 2018: Contrast-enhanced cadaver-specific computed tomography in gross anatomy teaching. Eur Radiol 28: 2838-2844.

https://doi.org/10.1007/s00330-017-5271-4

Raoof A, Henry RW, Reed RB. 2007: Silicone plastination of biological tissue: room temperature technique - Dow™/Corcoran technique and products. J Plast 22: 21-25.

https://doi.org/10.56507/AWAC9285

Rizzolo LJ, Stewart WB. 2006: Should we continue teaching anatomy by dissection when…? Anat Rec 289B: 215-218.

https://doi.org/10.1002/ar.b.20117

Schwarz CG, Kremers WK, Wiste HJ, Gunter JL, Vemuri P, Spychalla AJ, Kantarci K, Schultz AP, Sperling RA, Knopman DS, Petersen RC, Jack CR; Alzheimer's Disease Neuroimaging Initiative. 2021: Changing the face of neuroimaging research: comparing a new MRI de-facing technique with popular alternatives. Neuroimage 231: 117845.

https://doi.org/10.1016/j.neuroimage.2021.117845

Sora MC, Genser-Strobl B, Radu J, Lozanoff S. 2007: Three-dimensional reconstruction of the ankle by means of ultrathin slice plastination. Clin Anat 20: 196-200.

https://doi.org/10.1002/ca.20335

Sugiura A, Kitama T, Toyoura M, Mao X. 2020: The use of augmented reality technology in medical museums. In: Chan LK, Pawlina W (eds) Teaching Anatomy: A Practical Guide. Springer, Cham, pp: 337-347.

https://doi.org/10.1007/978-3-030-43283-6_34

Tomaka A, Luchowski L, Skabek K. 2009: From museum exhibits to 3D models. In: Cyran KA, Kozielski S, Peters JF, Stanczyk U, Wakulicz-Deja A (eds) Man-Machine Interactions. Adv Intell Soft Comput 59: 477-486. Springer, Berlin, Heidelberg.

https://doi.org/10.1007/978-3-642-00563-3_50

Tunali S, Kawamoto K, Farrell ML, Labrash S, Tamura K, Lozanoff S. 2011: Computerised 3-D anatomical modeling using plastinates: an example utilizing the human heart. Folia Morphol 70: 191-196.

von Hagens G, Tiedemann K, Kriz W. 1987: The current potential of plastination. Anat Embryol 175: 411-421.

https://doi.org/10.1007/BF00309677

Wish-Baratz S, Gubatina AP, Enterline R, Griswold MA. 2019: A new supplement to gross anatomy dissection: HoloAnatomy. Med Educ 53: 522-523.

https://doi.org/10.1111/medu.13845